I am a Ph.D. student in Computer Science at Columbia University, advised by Yunzhu Li. I'm broadly interested in robotics, computer vision, physics simulation, and machine learning. My research explores topics such as digital twins, dynamics modeling, and real-to-sim-to-real robot learning, and is partially supported by the Qualcomm Innovation Fellowship. Before Columbia, I received my Bachelor's degree from Tsinghua University (Yao Class). I have been fortunate to receive mentorship from Prof. Kris Hauser during my Ph.D. studies, and from Prof. Xiaolong Wang, Prof. Yang Gao, and Prof. Li Yi during my undergraduate studies.

\* indicates equal contribution. Representative papers are highlighted.

Kaifeng Zhang*, Shuo Sha*, Hanxiao Jiang, Matthew Loper, Hyunjong Song, Guangyan Cai, Zhuo Xu, Xiaochen Hu, Changxi Zheng, Yunzhu Li
ICRA, 2026
CVPR 2026 4DV Workshop (Oral Presentation)
website / arXiv / pdf / code
We propose a framework for evaluating robot policies in simulation, using Gaussian Splatting for rendering and soft-body digital twins for dynamics.

Heng Zhang*, Gehan Zheng*, Kaifeng Zhang, Hyunjong Song, Shivansh Patel, Xiaochen Hu, Yunzhu Li, Changxi Zheng, Peter Yichen Chen
IROS 2025 RoDGE Workshop
We present an interactive digital twin construction (real-to-sim) framework that learns the full dynamics of elastoplastic articulated objects from videos.

Hanxiao Jiang, Hao-Yu Hsu, Kaifeng Zhang, Hsin-Ni Yu, Shenlong Wang, Yunzhu Li
ICCV, 2025
website / arXiv / pdf / code
We optimize a spring-mass physics model of deformable objects and integrate the model with 3D Gaussian Splatting for real-time re-simulation with rendering.

Kaifeng Zhang, Baoyu Li, Kris Hauser, Yunzhu Li
RSS, 2025
website / arXiv / pdf / code / demo
We propose a neural particle-grid model for training dynamics models from real-world sparse-view RGB-D videos, enabling high-quality future prediction and rendering.

Mingtong Zhang*, Kaifeng Zhang*, Yunzhu Li
CoRL, 2024
website / arXiv / pdf / code / demo
We learn neural dynamics models of objects from real perception data and combine the learned model with 3D Gaussian Splatting for action-conditioned predictive rendering.

Kaifeng Zhang*, Baoyu Li*, Kris Hauser, Yunzhu Li
RSS, 2024
ICRA 2024 RMDO Workshop (Best Abstract Award)
website / arXiv / pdf / code
We learn a material-conditioned neural dynamics model using a graph neural network to enable predictive modeling of diverse real-world objects and achieve efficient manipulation via model-based planning.

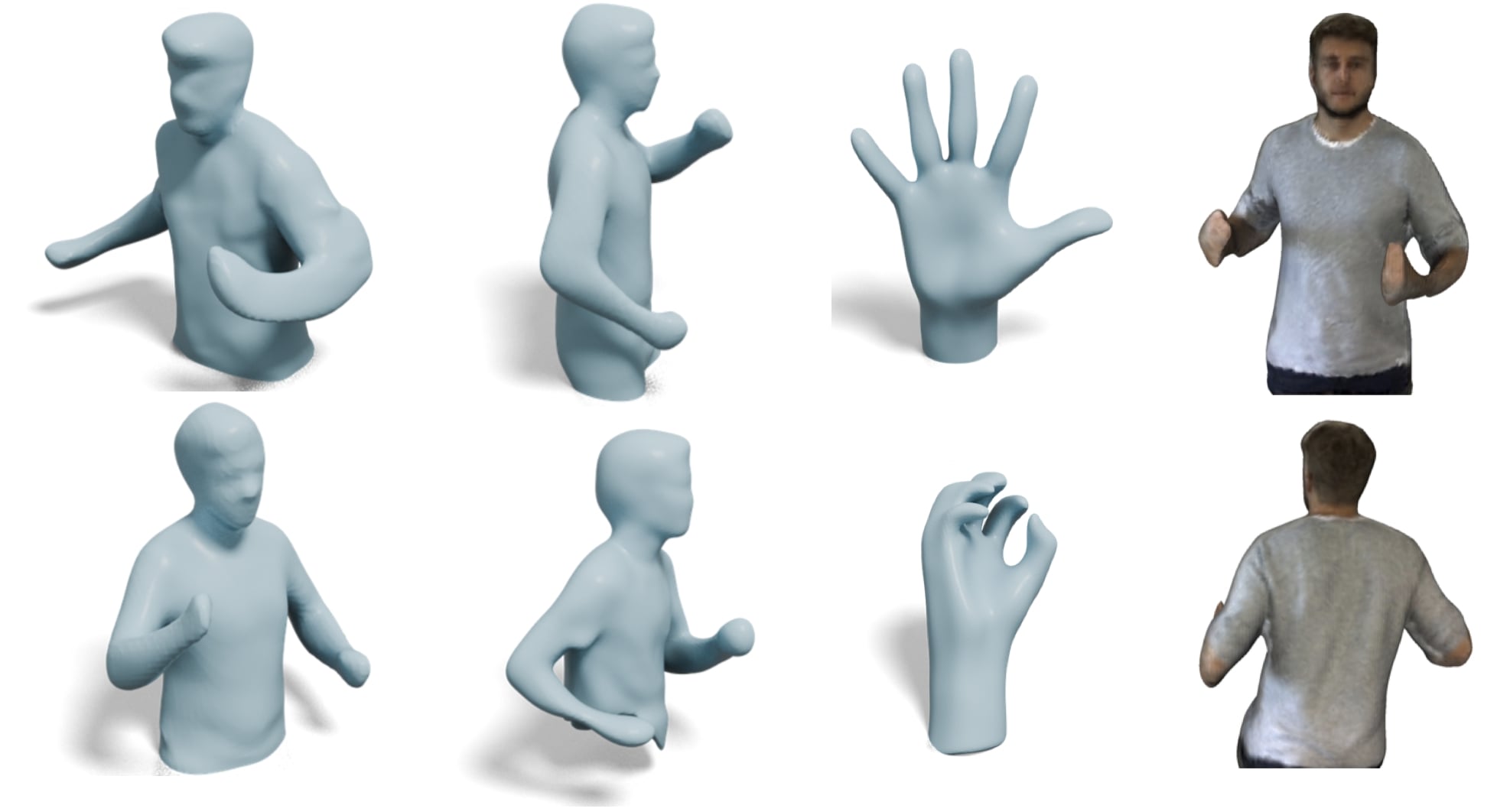

Xiaoyan Cong, Haitao Yang, Liyan Chen, Kaifeng Zhang, Li Yi, Chandrajit Bajaj, Qixing Huang
CVM, 2026
We achieve 4D neural implicit reconstruction from only a single-view scan using deformation and topology regularizations.

Kaifeng Zhang, Yang Fu, Shubhankar Borse, Hong Cai, Fatih Porikli, Xiaolong Wang
ICLR, 2023
website / arXiv / pdf / code
We propose a fully self-supervised method for category-level 6D object pose estimation by learning dense 2D-3D geometric correspondences. Our method can train on image collections without any 3D annotations.

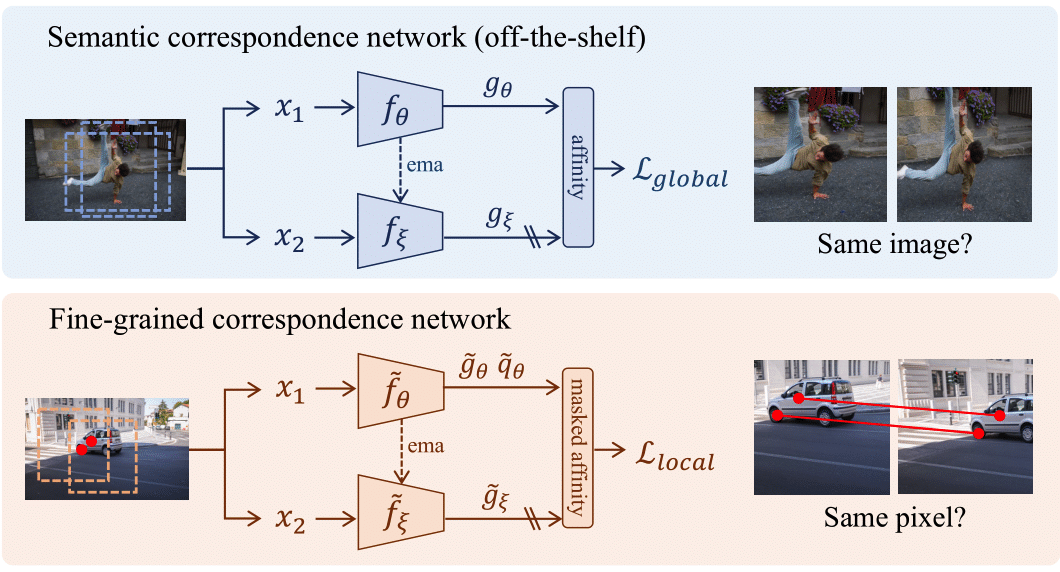

Yingdong Hu, Renhao Wang, Kaifeng Zhang, Yang Gao
ECCV, 2022 (Oral Presentation)
arXiv / pdf / code
We show that fusing fine-grained features learned with low-level contrastive objectives and semantic features from image-level objectives can improve SSL pretraining.

Template borrowed from Jon Barron